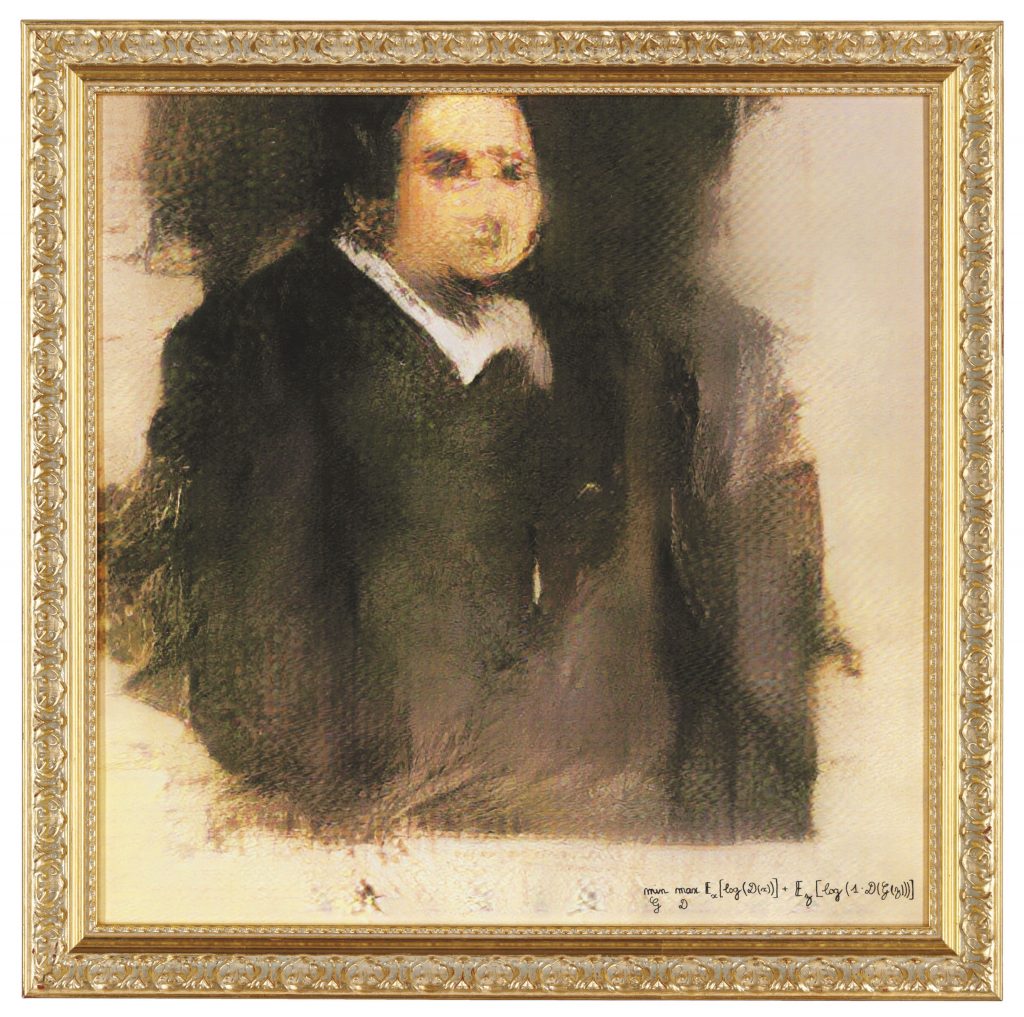

On October 25, 2018, the renowned auction house Christie’s put up for sale a work made with an Artificial Intelligence (AI) program. Portrait of Edmond Belamy sold for $432,500 (approximately R$ 2.5 million), about 45 times its estimated value. In place of the artist’s name, however, the blurred portrait was signed with the equation used to generate it. Christie’s also used this fact to build buzz around its own auction; a text published by the house stated: “This portrait is not the product of a human mind”. However, the formula the AI used to generate Portrait of Edmond Belamy was devised by the human minds that make up the Parisian art collective Obvious. Regardless, it was the first work made with an AI program to go under the hammer at a major auction house, attracting significant media attention and some speculation about what Artificial Intelligence means for the future of art.

Artists have been using AI to create for the past 50 years, notes Ahmed Elgammal, a professor in the Department of Computer Science at Rutgers University. According to Elgammal, one of the most prominent examples is AARON, the program written by Harold Cohen; another is Lillian Schwartz, a pioneer in the use of computer graphics in art, who also experimented with AI. What, then, sparked the speculation around Portrait of Edmond Belamy? “The auctioned work at Christie’s is part of a new wave of AI art that has appeared in recent years. Traditionally, artists who use computers to generate art need to write detailed code that specifies the ‘rules for the desired aesthetic’”, explains Elgammal. “In contrast, what characterizes this new wave is that the algorithms are set up by the artists to ‘learn’ the aesthetics by looking at many images using machine-learning technology. The algorithm then generates new images that follow the aesthetics it had learned”, he adds. The most widely used tool for this is the GAN, short for Generative Adversarial Network, introduced by Ian Goodfellow in 2014. For Portrait of Edmond Belamy, the collective Obvious used a database of fifteen thousand portraits painted between the 14th and 20th centuries. Trained on this collection, the algorithm fails to imitate the pre-curated input faithfully and instead generates distorted images, the professor notes.
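For readers curious about what “learning the aesthetics” means mechanically, here is a deliberately tiny Python sketch of the adversarial idea behind GANs. It is not the code Obvious used, and it rests on heavy simplifying assumptions. The “images” are single numbers drawn from a bell curve. The generator learns only one shift parameter, `b`. The discriminator is a logistic regression rather than a neural network, so it can only teach the generator to match the average of the real data. The names `train_toy_gan`, `real_mean`, `lr_d` and `lr_g` are hypothetical, chosen for illustration. The discriminator is rewarded for telling real samples from generated ones, and the generator is rewarded when its samples are mistaken for real ones.

```python
import math
import random


def sigmoid(s: float) -> float:
    """Numerically stable logistic function, 1 / (1 + e^-s)."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def train_toy_gan(real_mean: float = 2.0, steps: int = 2000, batch: int = 64,
                  lr_d: float = 0.1, lr_g: float = 0.1, seed: int = 0) -> dict:
    """Train a one-dimensional toy GAN (hypothetical helper) and return its parameters.

    "Real" samples are single numbers drawn from N(real_mean, 1).
    Generator:     G(z) = z + b with z ~ N(0, 1); only the shift b is learned.
    Discriminator: D(x) = sigmoid(w * x + c), its estimate that x is real.
    """
    rng = random.Random(seed)
    b = 0.0          # generator parameter, deliberately started away from the data
    w, c = 0.0, 0.0  # discriminator parameters

    for _ in range(steps):
        # Discriminator step: gradient ascent on
        # mean log D(real) + mean log(1 - D(fake)).
        real = [rng.gauss(real_mean, 1.0) for _ in range(batch)]
        fake = [rng.gauss(0.0, 1.0) + b for _ in range(batch)]
        grad_w = grad_c = 0.0
        for x in real:
            d = sigmoid(w * x + c)
            grad_w += (1.0 - d) * x
            grad_c += 1.0 - d
        for x in fake:
            d = sigmoid(w * x + c)
            grad_w -= d * x
            grad_c -= d
        w += lr_d * grad_w / batch
        c += lr_d * grad_c / batch

        # Generator step: gradient ascent on mean log D(G(z)), the
        # "non-saturating" generator objective; d/db log D(x) = (1 - D(x)) * w.
        grad_b = 0.0
        for _ in range(batch):
            x = rng.gauss(0.0, 1.0) + b
            grad_b += (1.0 - sigmoid(w * x + c)) * w
        b += lr_g * grad_b / batch

    return {"b": b, "w": w, "c": c}


params = train_toy_gan()
print(f"learned shift b = {params['b']:.2f} (real mean = 2.0)")
```

After training, `b` should settle near `real_mean`. The generator drifts toward the real data because that is the only way to keep fooling the discriminator. The discriminator and generator are updated in alternation, which is the usual way GANs are trained. Real GANs replace both one-line models with deep networks trained on thousands of images, such as the fifteen thousand portraits mentioned above.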

“It’s entirely plausible that AI will become more common in art as the technology becomes more widely available”, says art critic and former Frieze editor Dan Fox in an interview with arte!brasileiros. “Most likely, AI will simply co-exist alongside painting, video, sculpture, performance, sound and whatever else artists want to use”, he adds. Fox also points out that we must not forget that “the average artist, at the moment, isn’t able to afford access to this technology. Most can barely afford their rent and bills. This world of auction prices is so utterly divorced from the average artist’s life right now that I think you have to acknowledge that whoever is currently working with AI is coming from a position of economic power or access to research institutions”. Enthusiasm for Portrait of Edmond Belamy may be fueled by appeals to progress and by a yearning for “the future” and for innovation. Behind the smoke and mirrors, however, the critic suggests that “AI will be of interest to the art industry if human beings can make money from it”.

Can a robot be creative?

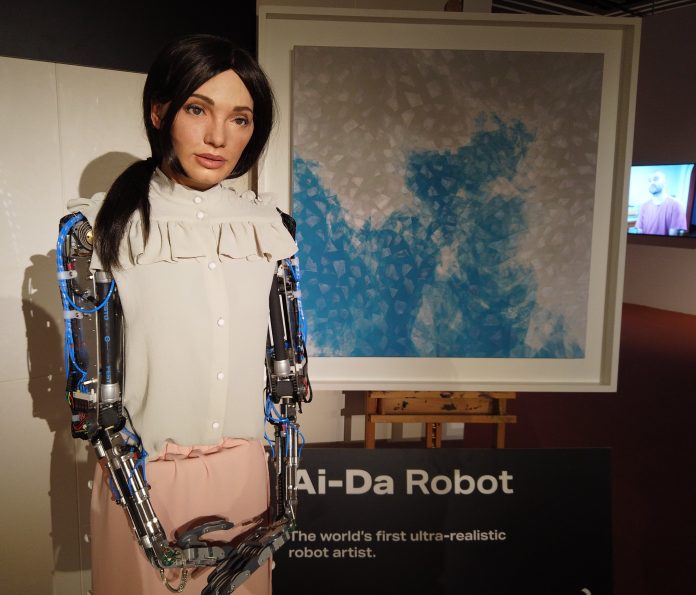

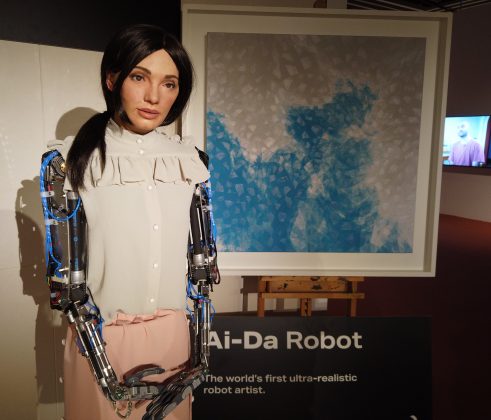

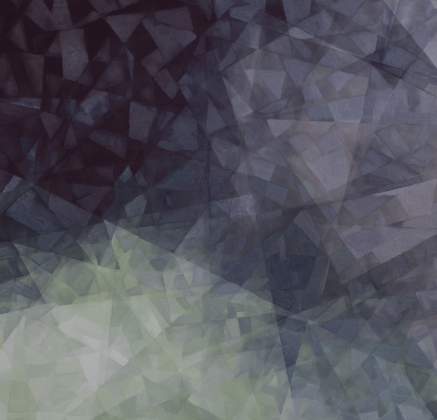

In the year after the Christie’s sale, Ai-Da was completed. She is named after Ada Lovelace, an English mathematician recognized for having written the first algorithm intended to be processed by a machine. Ai-Da describes herself as “the world’s first ultra-realistic robot artist with Artificial Intelligence”. She explains that she draws using the cameras in her eyes. In collaboration with humans, she also paints and sculpts, and she gives performances. “I am a contemporary artist and I am contemporary art at the same time”, says Ai-Da, before posing the question her audience should already be asking: “How can a robot be an artist?”. Although the question may seem intricate at first, there is another, more challenging level to this issue: “Can a robot be creative?”

Back in 2003, author and science journalist Matthew Hutson explored the topic in his master’s thesis at the Massachusetts Institute of Technology (MIT). In Artificial Intelligence and Musical Creativity: Computing Beethoven’s Tenth, he argues that “computers simulate human behavior using shortcuts. They may appear human on the outside (writing jokes, fugues, or poems) but they work differently under the hood. The facades are props, not backed up by real understanding. They use patterns of arrangements of words and notes and lines. But they find these patterns using statistics and cannot explain why they are there”. Hutson lists three main reasons for this: “First, computers work with different hardware than the human brain. Mushy brains full of neurons and flat silicon wafers packed with transistors will never behave the same and can never run the same software. Second, we humans don’t understand ourselves well enough to translate our software to another piece of hardware. Third, computers are disembodied, and understanding requires living physically in the world”. On the last point, he argues that particular qualities of human intelligence result directly from the particular physical structure of our brains and bodies: “We live in an analog (continuous, infinitely detailed) reality, but computers use digital information made up of finite numbers of ones and zeroes”.

Asked whether his 2003 thesis holds up almost two decades later, Hutson tells arte!brasileiros that even today he wouldn’t necessarily describe AI’s artistic output as creative. It may be visually or semantically interesting, but the AI doesn’t understand that it is making art or expressing something deeper. As Seth Lloyd, professor of mechanical engineering and physics at MIT, puts it, “raw information-processing power does not mean sophisticated information-processing power”. Philosopher Daniel C. Dennett explains that “these machines do not (yet) have the goals or strategies or capacities for self-criticism and innovation to permit them to transcend their databases by reflectively thinking about their own thinking and their own goals”. However, Hutson stresses, “these may be human-centric concepts. AI may evolve to be just as creative as humans but in a completely different way, such that we wouldn’t recognize its creativity, nor it ours”.

Can culture lose jobs to AI?

“Nowadays, when cars and refrigerators are jammed with microprocessors and much of human society revolves around computers and cell phones connected by the Internet, it seems prosaic to emphasize the centrality of information, computation, and communication”, writes Lloyd in an article for Slate. We have reached a point of no return. Looking ahead to the next century, the question of machine creativity is just one of many uncertainties surrounding technology. More tangible, for now, is the possible unemployment crisis triggered by advances in AI combined with robotics.

For example, a 2013 study by researchers at the University of Oxford found that nearly half of all jobs in the U.S. were at risk of being automated within the next two decades. Globally, at least twenty million manufacturing jobs could be replaced by robots by 2030, according to a more recent analysis by Oxford Economics. This 2019 analysis also warns that repetitive and/or mechanical jobs, “where robots can carry out tasks more rapidly than humans”, are at greater risk of being eliminated. Jobs that require more “compassion, creativity, and social intelligence” are more likely to continue to be performed by humans. Since the art world is not made up only of curators and collectors, it should be concerned as well. Earlier this year, during the pandemic, Tim Schneider, market editor at Artnet, warned: “What happens when you combine mass layoffs, a keenness to minimize in-person interactions for health reasons, and tech entrepreneurs’ willingness to heavily discount their devices so they can secure potentially lucrative proof of concept in the cultural sector?”

Historian and philosopher Yuval Noah Harari adds a qualitative perspective to the quantitative one presented by Oxford Economics, bringing the nature of work and its specialization into the equation: “Say you shift back most production from Honduras or Bangladesh to the US and to Germany because the human salaries are no longer a part of the equation, and it’s cheaper to produce the shirt in California than it is in Honduras, so what will the people there do? And you can say, ‘OK, but there will be many more jobs for software engineers’. But we are not teaching the kids in Honduras to be software engineers”.

Agents or tools? AI and ethics

Estimates about automation seem comparatively well grounded. Beyond them, it is difficult to form a clear picture of the future of AI, whether in terms of creativity or consciousness. “Technological prediction is particularly chancy, given that technologies progress by a series of refinements, are halted by obstacles and overcome by innovation”, says Lloyd. “Many obstacles and some innovations can be anticipated, but more cannot”.

For Dennett, “strong AI”, or artificial general intelligence, is possible in principle in the long run but not desirable. “The far more constrained AI that’s practically possible today is not necessarily evil. But it poses its own set of dangers”, he warns. According to the philosopher, we do not need artificial conscious agents, which is what he means by “strong AI”. His reason is that there is “a surfeit of natural conscious agents, enough to handle whatever tasks should be reserved for such ‘special and privileged entities’”. What we need instead are intelligent tools.

To justify not building artificial conscious agents, Dennett argues that “however autonomous they might become (and in principle they can be as autonomous, as self-enhancing or self-creating, as any person), they would not—without special provision, which might be waived—share with us natural conscious agents our vulnerability or our mortality”. His statement echoes the writings of Norbert Wiener, the father of cybernetics, who cautiously warned: “The machine like the djinnee, which can learn and can make decisions on the basis of its learning, will in no way be obliged to make such decisions as we should have made, or will be acceptable to us”.

On the ethical development of AI, Fei-Fei Li, co-director of Stanford University’s Human-Centered AI Institute, states that AI should be studied in a multidisciplinary way, cross-pollinated with economics, ethics, law, philosophy, history, cognitive science and other fields, “because there is so much more we need to understand in terms of AI’s social, human, anthropological and ethical impact”. Also within academia, Hutson suggests that “conferences and journals could guide what gets published, by taking a technology’s broader impact into account during peer review, and requiring submissions to address ethical concerns”. In addition, he points out, funding agencies and internal review boards at universities and corporations could step in to shape research at its nascent stage. Once scientific findings are published, “regulations could ensure that companies don’t sell harmful products and services, and laws or treaties could ensure that governments don’t deploy them”.

*Modifications were made to the article for clarity.


MASP (Museu de Arte de São Paulo Assis Chateaubriand) presents Claudia Alarcón & Silät: viver tecendo. The exhibition brings together 25 works from the artistic production of Claudia Alarcón (La Puntana, Argentina, 1989) & Silät, a collective of more than one hundred weavers of the Wichí people. Curated by Adriano Pedrosa, artistic director, MASP, and Laura Cosendey, assistant curator, MASP, the exhibition marks the debut of the artist and the group at a Brazilian museum.

The works are made with threads of chaguar, a bromeliad with resilient fibers native to the semi-arid climate of the Gran Chaco. The Gran Chaco is the largest biome in Latin America after the Amazon, covering northern and northeastern Argentina and reaching into Paraguay. The preparation of chaguar and the technique of interlacing the threads by hand, without a loom, come from the making of yica bags, a central object in Wichí culture. Traditionally, the yica is square, with geometric patterns representing the flora and fauna of the Wichí territory and evoking motifs such as armadillo ears, owl eyes and turtle shells. Although the yica is the starting point of Alarcón & Silät’s work, their pieces go beyond this traditional repertoire. Following workshops that proposed new formats for the yica bags, the Silät collective formed in 2023 and began producing textiles within an artistic context.

Historically, Wichí textiles had earthy, reddish and grayish-blue tones, but the artists began adding more intense colors with aniline dyes while preparing the threads, reaching exuberant shades such as orange and fuchsia. Another important innovation of Alarcón & Silät’s work lies in the production process itself. Traditionally, women always wove individually. The members of Silät, by contrast, developed methods that allow several weavers to work simultaneously on the same piece or to continue another weaver’s work.

Wichí mythology also informs the work of Alarcón & Silät. In Kates tsinhay — Mujeres estrellas [Star Women], 2023, Claudia Alarcón evokes the myth of the star women. The story tells that women were stars in the sky and descended to Earth every night on chaguar threads they themselves had woven. They came to feed, stealing the fish the men caught. When the men found out, they cut the threads, and the women remained on Earth. This work and others inspired by this symbolic narrative blend ancestral geometries with figurative elements to outline stars, moons, celestial bodies and starry skies.

“I recover legends and stories of our people; I feel there is a lot of work to be revived. I think about how to recover this, because it is something that perhaps cannot be said orally; we cannot shout it. But the textile also speaks. There are those who can understand or feel it in the textile. I realized that, although we weave in silence, everything is said in the textile”, says Alarcón.

The Wichí call their territory tayhi and consider it a fundamental part of their identity, with a spiritual and symbolic dimension. In Spanish, the region is called monte. Yet although the name suggests mountains, the local terrain is mostly flat. Everyday experience, the wind, the day, dusk, night, the constellations and many other elements of life in the monte are present in the colors and in the organic and geometric forms of Alarcón & Silät’s works. In the abstraction Kyelhkyup — El otoño [Autumn], 2023, from the MASP collection, the weavers’ sensitive eye for natural cycles portrays the changes in tones, textures and light as the seasons pass in the monte.

Weaving together, combined with these innovations, has made it possible to create textile compositions that carry a multiplicity of voices and colors, joining traditional patterns with a contemporary visual and poetic repertoire. “The textiles have become banners of struggle, standards that carry messages and stories and give voice to the women of the community”, says Laura Cosendey.

Both the individuality of the artists and the collective dimension come through in the installation Hilulis ta llhaiematwek — Un coro de yicas [A Chorus of Yicas] (2024-25), which brings together more than one hundred bags, each made by a member of the group. Personal choices of color and pattern stand out when the works are shown side by side. Their presentation as a whole reinforces the political character of the collective’s organizing, which has enabled the weavers to criticize issues such as the devaluation of ancestral knowledge and the precarious conditions of their labor.

In the exhibition, the works are presented in frames or on vertical wooden structures that recall the way these textiles are produced and, occasionally, displayed in the community where the weavers live. The ensemble N’äyhay wet layikis — Caminos y cicatrizes [Paths and Scars] is one of the works shown on this display system proposed by MASP. The collective conceived the textile composition in 2025 for the Nove de Julho (July 9), the day Argentina celebrates its independence. The women wove it to denounce the violent repression carried out over time by the Argentine State against Indigenous peoples.

Claudia Alarcón & Silät: viver tecendo is part of MASP’s annual program dedicated to Latin American Histories. The year’s schedule also includes exhibitions by La Chola Poblete, Sandra Gamarra Heshiki, Santiago Yahuarcani, Colectivo Acciones de Arte, Damián Ortega, Sol Calero, Carolina Caycedo, Pablo Delano, Rosa Elena Curruchich, Manuel Herreros and Mateo Manaure, Jesús Soto, and an international group exhibition.

Visitor information

Exhibition | Claudia Alarcón & Silät: viver tecendo

March 6 to August 2

Tuesdays free, 10 a.m. to 8 p.m. (last entry at 7 p.m.); Wednesday and Thursday, 10 a.m. to 6 p.m. (last entry at 5 p.m.); Friday, 10 a.m. to 9 p.m. (free admission from 6 p.m. to 8:30 p.m.); Saturday and Sunday, 10 a.m. to 6 p.m. (last entry at 5 p.m.); closed on Mondays.

Online booking required at masp.org.br/ingressos

Venue

MASP

Avenida Paulista, 1578, São Paulo


DAN Galeria opens the exhibition Máscaras, Ivald Granato – Quem é você?, curated by Maria Alice Milliet. This previously unseen exhibition brings together a group of paintings Granato made in the late 1990s and places them in dialogue with African masks preserved in the collections of Christian-Jack Heymès and the Mastrobuono family. The opening takes place in São Paulo and is linked to the schedule of the 22nd edition of SP-Arte.

The exhibition starts from a fact central to any reading of Ivald Granato’s career. For decades, his public presence, his performative actions and his irreverent energy played a decisive role in how his work was received. This aspect is unavoidable, but it does not sum him up.

Granato was also a painter of great technical mastery, an exceptional draftsman and a deep connoisseur of art history. He moved between visual languages and repertoires with rare intimacy, not to repeat styles but to put them under tension through a very personal visual intelligence. Maria Alice Milliet recalls that by the time he reached maturity, after more than three decades of exhibitions, awards and recognition, Granato already held a prominent place on the Brazilian art scene. A talented draftsman and painter, he had passed through the “isms” and Pop Art in close tune with his time.

This exhibition helps bring that point back into focus, situating it within a consistent investigation in which painting, memory, theatricality and identity intertwine. In the late 1990s, Granato stepped back for a moment from his more immediate engagement with the contemporary and turned to deeper dimensions of his own background. The mask series grew out of this shift. According to the curator, this passage marks a turning point in his career, when the artist sought values tied to the past, to ancestry and to Brazilian cultural memory. In 1998, Granato made a series of paintings on paper called The Mask. He then developed larger works gathered under the title Quem é você – The Mask. For the artist, these masks were visual notes of faces that populated his imagination.

In discussing this body of work, Maria Alice Milliet shifts the usual reading, which tends to link this kind of repertoire only to the European modernist tradition. In Granato’s case, it is tied to a search for cultural roots and a desire to affirm identity. The curator recalls his mixed ancestry, with Black and Indigenous heritage, and places this series in a field of belonging, symbolic recognition and reverence, marked by an approach that comes from within. This aspect is decisive for understanding the exhibition. African ancestry appears as a structural force in Brazilian culture and as a key to rereading an important part of his work.

Milliet observes that, after a first foray into figures closer to a popular, carnivalesque universe, Granato turned to tribal masks. In the series whose question gives the exhibition its title, strange faces emerge one after another from dark backgrounds, in compositions that condense graphic intensity, chromatic energy and a strong symbolic charge. Representation carries particular weight in this group of works. It is a figure of passage, a condensation of gesture, the invention of a persona and a ritual presence. Ten years after his death, Máscaras, Ivald Granato – Quem é você? lets us grasp the artist’s complexity more clearly.

Visitor information

Exhibition | Máscaras, Ivald Granato – Quem é você?

March 28 to June 25

Monday to Friday, 10 a.m. to 7 p.m.; Saturdays, 10 a.m. to 1 p.m.

Venue

DAN Galeria

Rua Estados Unidos, 1638 01427-002 São Paulo - SP


Casa de Cultura do Parque presents the exhibition “Badauê” by Andrea Brazil (Gabinete) as part of its 1st Exhibition Cycle (I Ciclo Expositivo). Curated by Claudio Cretti, the Casa’s artistic director, with a text by Ana Avelar, the exhibition brings together works marked by geometrization and the visual reconfiguration of vernacular architecture.

Andrea Brazil’s (São Paulo, 1972) path between Salvador and the island of Itaparica, in the Recôncavo Baiano, underpins her functional visual language. The artist grew up surrounded by a coastal architecture marked by anonymous interventions, made with roof-tile shards and leftover materials, which make up what she calls “drawing in space”.

In this process, the facades of houses and shops, window grilles and other urban elements are reorganized as lines, colors and voids. “It is a collective, vernacular production that condenses history, climate, technique and aesthetic desire into a single constructive gesture”, says Avelar.

Brazil’s interest in the vernacular architecture of her childhood deepened during a trip to the Algarve region of Portugal. There, the Moorish influence, recognizable in rounded corners and geometric patterns, resonated with what the artist knew from Bahia.

For Avelar, the connection is not accidental. Brazil notes that the Black Malês who led the 1835 revolt in Salvador were mostly of Muslim origin and carried a visual tradition that seeped into the city’s material culture. In this sense, ornament holds historical layers that the surface of the facades does not explicitly reveal.

One of the series on view is built on wooden panels covered with layers of wall putty applied in two stages. The pattern of the grilles, the product of an internalized visual memory, is carved into the surface until the underlying color is revealed. When the work relies on color, it stands out for its optical vibration, moving between the stridency of Pop Art and the silence of matte surfaces.

In addition to Brazil’s solo show, the 1st Exhibition Cycle includes the group exhibition “O horror, o humor e o absurdo” (Galeria do Parque) and “Calendário” by Felipe Rezende (Projeto 280X1020). The cycle moves along the threshold between the real and the imaginary and treats fabulation as an indispensable tool for subverting the current configurations of the world. Claudio Cretti says this cycle seeks, through different languages, “to stretch the limits between the conceivable and the inconceivable, highlighting the potential of fiction for thinking critically about reality”.

Finally, the Performance program opens on March 28, 2026, at 5 p.m., with “Lago” by performer and dancer Maria Noujaim. By translating mythology into movement, the artist explores hybrid states between animal and human, taking the Greek myth of Leda and the Swan as her starting point.

The 1st Exhibition Cycle is curated by Claudio Cretti and was conceived by the Instituto de Cultura Contemporânea (ICCo). It was carried out with funding through the Lei Rouanet (Ministry of Culture), with sponsorship from banco BV, Laranjinha and Banco Itaú.

Visitor information

Exhibition | Badauê

March 28 to June 28

Wednesday to Sunday, 11 a.m. to 6 p.m.

Venue

Casa de Cultura do Parque

Av. Prof. Fonseca Rodrigues, 1300 - Alto de Pinheiros, São Paulo - SP, 05461-010


Curated by Ayrson Heráclito and Rodrigo Moura, the exhibition Mestre Didi – invenção e ancestralidade na arte afro-brasileira, at Itaú Cultural, covers the entire trajectory of the priest-artist. It highlights his importance both for broadening the horizons of the art world and for advancing the fight against racism.

Over his lifetime, Bahia-born Mestre Didi (1917-2013) traversed and expanded the domains of art and religion. Didi’s many artistic and craft skills earned him the title of master, just as his calling and his religious work placed him in prominent positions within the cults. It is this journey that Itaú Cultural (IC) presents from April 8 to July 5, 2026.

Beyond Didi’s creations in the visual arts, the solo exhibition addresses his textual and literary production and his role in developing important organizations and events, in Brazil and abroad, devoted to researching and promoting African and Afro-diasporic cultures. The exhibition space also explores the connections between Didi and other creators, such as his contemporary Abdias Nascimento, the subject of an Ocupação Itaú Cultural exhibition in 2016.

Mestre Didi – invenção e ancestralidade na arte afro-brasileira opens on April 7 at 7 p.m. To celebrate the occasion, the terreiro Ilê Asipá presents Oro Ojés, a traditional ceremony held at every festivity of the house Didi himself founded in Salvador.

Visitor information

Exhibition | Mestre Didi – invenção e ancestralidade na arte afro-brasileira

April 8 to July 5

Tuesday to Saturday, 11 a.m. to 8 p.m.; Sundays and holidays, 11 a.m. to 7 p.m.

Floors 1, -1 and -2

Venue

Itaú Cultural

Avenida Paulista, 149, São Paulo - SP


Maxwell Alexandre completely transforms the space of Almeida & Dale Fradique in Pintor preto, figuração branca., his first solo exhibition at the gallery. The exhibition develops, conceptually and materially, two celebrated bodies of work: Clube, first shown at the Museu Histórico da Cidade in Rio de Janeiro (2024), and Cubo Branco, known for the work Galeria n.2, conceived for the 36th Bienal de São Paulo (2025).

The Clube series began in 2020, when Maxwell started frequenting the Clube de Regatas do Flamengo in Gávea, an upscale neighborhood of Rio de Janeiro next to Rocinha, the favela where the artist was born and raised. It was on the club’s grounds that Maxwell turned his attention to white bodies. This marked a turning point in his work, which until then had depicted only Black people, and culminated in the concept of “white figuration”, the hallmark of this new exhibition.

The club’s high walls offer its members and visitors an “oasis”, seemingly cut off from the contradictions and complexities of its surroundings. At the same time, that oasis exposes the power structures present in peaceful scenes of sunbathing and games in swimming pools.

In Maxwell’s work, the club becomes a metaphor for all spaces “of well-being, leisure, abundance, safety, tranquility, good architecture, good design, good art, good food”, as the artist writes. The pictorial genre “white figuration” becomes a conceptual operation to highlight whiteness in the field of painting and art history. As Maxwell puts it: “If there is Black figuration, there must be white figuration”.

With this move, Maxwell Alexandre addresses his own role as an artist. He gained international recognition with the series Pardo é Papel and Novo Poder, which portrayed, respectively, everyday scenes in the favela and Black people in spaces of power. With these new works, Maxwell dislodges white bodies from their place of neutrality, calling into question a centuries-old relationship in art history between the Black painter and the object of painting, white figuration.

The Clube series denaturalizes the representation of the white man in painting, establishing a new configuration in the relationship of otherness within images. And it is through the supposed neutrality of the White Cube that Maxwell points to certain core values within the art system.

No one calls the representation of the white man white figuration. Everyone knows the representation of the white man in painting simply as figuration. The most exhausted and canonized genre in art history is neutral; it has not yet received a classification. Since the representation of the white man is understood as the avatar of humanity, it could not have been classified and racialized.

Maxwell Alexandre

Visitor information

Exhibition | Pintor preto, figuração branca

April 11 to May 30

Monday to Friday, 10 a.m. to 7 p.m.; Saturday, 11 a.m. to 4 p.m.

Venue

Almeida & Dale Fradique

Rua Fradique Coutinho 1360 | 1430, São Paulo - SP


Peter Halley presents a new exhibition at Almeida & Dale Fradique. The American Connection, the American artist’s first exhibition in Brazil in five years, features a group of recent paintings with his iconic fluorescent colors and cell structures that expand the picture.

Halley emerged in the 1980s as one of the leading names of post-conceptualism and is known for fluorescent geometric paintings that emphasize color and systems. His works use the language of geometric abstraction to explore the organization of social space in the digital age.

“The works in The American Connection take on the metaphorical representation of electronic devices linked by conductors. They are cells that evoke neural systems or computer terminals, but they could also be prison cells, as the titles of some of the recent paintings on view suggest”, writes Antonio Gonçalves Filho, cultural director of Almeida & Dale.

With titles such as Cell (in both its biological and its carceral sense) and Prison, the paintings reflect on the structures of social organization, in an artistic practice aligned with structuralist thought and with French philosophers such as Foucault, Baudrillard, and Lyotard. The forms of his work also seem to respond to the isolation and individualization of the present moment. As Gonçalves Filho adds: “the painter shows how the seduction of fluorescence and the anti-naturalism of computer and cell-phone light end up imprisoning the contemporary individual.”

Visitor information

Exhibition | The American Connection

Period

April 11 to May 30

Monday to Friday, 10 a.m. to 7 p.m.; Saturday, 11 a.m. to 4 p.m.

Venue

Almeida & Dale Fradique

Rua Fradique Coutinho 1360 | 1430, São Paulo - SP

Artist and architect Edo Rocha is the subject of a major retrospective at the Oca do Ibirapuera in São Paulo. Curated by Agnaldo Farias, “Edo Rocha: Arte e Arquitetura” [Art and Architecture], which opens to the public on May 6, brings together more than 400 works and offers a broad overview of a career spanning more than 60 years, highlighting the connections between his artistic production and his architectural projects.

Spread across the four floors of the building designed by Oscar Niemeyer, the exhibition brings together drawings, paintings, sculptures, photographs, and installations, as well as models, plans, and other forms of representing architectural and urban projects, revealing how the artist's visual investigations also unfold in his practice as an architect.

“This exhibition is a summary of my production. It shows the interplay between art and architecture and how, little by little, these two creative sides connect. A show like this could only be assembled in a space like the Oca, where it is possible to present these various artistic and creative activities,” says Edo Rocha.

The exhibition design avoids hierarchies among the different languages and modes of representation, proposing a route through distinct moments of the artist's career: from his first drawings, made while he was still a teenager, through his investigations of color and abstraction and his graphic work, to recent architectural projects and installations. The route culminates in a previously unseen educational work that reflects on the future of the planet and nature's responses to human action.

Art and architecture in dialogue

Recognized as one of the most inventive names in contemporary Brazilian architecture, Edo Rocha has developed a singular career in which artistic practice and design work go hand in hand. Throughout the exhibition, it becomes clear how visual experiments in his sculptures and drawings reverberate in buildings and urban projects, revealing a creative thinking that cuts across disciplines.

On the ground floor, visitors encounter granite sculptures and metallic and mirrored pieces from the series Os três mosqueteiros [The Three Musketeers]. Architectural projects are presented on banners accompanied by videos, while a group of models forms a kind of imagined city, bringing together both built structures and conceptual proposals.

The historical works also include pieces shown at the 10th São Paulo Biennial, in 1969, a period when the artist was consolidating his presence in the visual arts. One of Edo Rocha's best-known architectural projects, Allianz Parque, also takes a central place in the exhibition with an installation dedicated to the stadium, which dialogues with the works Onda Verde and Palmeiras.

“According to Paul McCartney, this is the best arena in the world, and he is not the only one who says so. That is because Edo loves acoustic technology and has a deep knowledge of the field,” says Agnaldo Farias. “It is an impressive, out-of-the-ordinary case of someone who moves naturally across several disciplines.”

Photography, nature, and perception

On the second floor, the exhibition presents three photographic series produced this year: Japão [Japan], Wabi Sabi, and O Cosmo [The Cosmos]. In Japão, Rocha records the country's landscapes (lakes, gardens, and trees with vibrant autumn foliage), highlighting species symbolic of Japanese culture, such as the katsura.

In Wabi Sabi, the artist explores the Japanese aesthetic that values imperfection, impermanence, and simplicity. Fallen leaves, cracks in tree trunks, and the marks of time reveal a beauty associated with the natural passing of the years.

The series O Cosmo is presented both as photographs and as an installation of 80 suspended monitors with mirrors on their backs, creating a visual effect reminiscent of a kaleidoscope.

Music, technology, and sustainability

Another important dimension of Rocha's career, his relationship with music, appears on the Oca's lower ground floor. There, a grand piano capable of reproducing performances by renowned pianists, synchronized with video projections, is part of a space conceived as a lounge where visitors can rest and contemplate.

Known for integrating design, technology, and sustainability in his projects, the artist has also incorporated into the exhibition acoustic panels made with Ecoshapes, boards of recycled PET installed along the exhibition route.

The environmental concern present in Rocha's work since the beginning of his career also appears in an audiovisual installation that addresses issues such as the water crisis, rising carbon dioxide emissions, and the intensification of natural disasters.

The exhibition is supported by Brazil's Federal Culture Incentive Law (Lei Rouanet).

Edo Rocha: Born in São Paulo in 1949, Edo Rocha began his artistic training at a young age, holding his first solo exhibition at the Ars Mobile gallery when he was 16.

As a teenager he lived in Salvador (BA), where he studied painting at the Instituto Cultural Brasil Alemanha and took part in group exhibitions connected to its experimental painting course. Back in São Paulo, he participated in the 9th São Paulo Biennial, in 1967, and returned two years later for the 10th Biennial, in 1969.

During the 1970s, he twice received the Prêmio de Aquisição Jovem Arte Contemporânea (Young Contemporary Art Acquisition Prize), awarded by the Museu de Arte Contemporânea da USP. Since then, he has built a career spanning the visual arts, architecture, and urbanism, establishing himself as a singular figure in Brazil's cultural landscape.

Visitor information

Exhibition | Edo Rocha: Arte e Arquitetura

Period

May 6 to July 20

Tuesday to Sunday, 10 a.m. to 7 p.m. (last entry at 6 p.m.)

Venue

OCA

Pavilhão Lucas Nogueira Garcez, Parque Ibirapuera – São Paulo, SP

A broad survey of contemporary Brazilian visual production, spanning different languages and around 130 artists from every region of the country, takes shape in the exhibition Delírio Tropical – Recanto at Sesc Pinheiros, curated by Orlando Maneschy with associate curator Keyla Sobral. With around 280 works, the show offers an immersion in the imaginary of a plural, multiple Brazil in constant reinvention, composing a mosaic that ranges from historical images to contemporary works focused on singular experiences in distinct territories and ways of understanding the world.

Delírio Tropical – Recanto builds a narrative that weaves together different visual and artistic expressions, from photography to video, including posters, street paste-ups (lambes), magazines, sculptures, paintings, and objects. The exhibition reflects on the role of images in shaping an understanding of the country and its multiple identities, through a curatorial selection of artists committed to their experiences, territories, and ways of existing, including cultural perspectives from the country's various regions alongside a diversity of contemporary voices.

The exhibition includes works by artists such as Anna Maria Maiolino, Ayrson Heráclito, Cildo Meireles, Claudia Andujar, Dalton Paula, Denilson Baniwa, Hal Wildson, Índigo Braga, Rosângela Rennó, and Karim Aïnouz.

“We sought to bring together artists who look at Brazil from their own territories and who use the image as a decisive element in constructing discourses, emphasizing the idea of a diverse country,” says curator Orlando Maneschy. “The exhibition is an invitation to break with stereotyped views and to open up to experiences that displace and transform us,” he adds.

Originally presented at the Pinacoteca do Ceará as part of Fotofestival Solar, the exhibition now travels to Sesc Pinheiros, widening access to a body of works that challenge stereotypes and reveal a Brazil marked by symbolic disputes, affections, violence, resistance, and desires. In São Paulo, the show includes a new work by Keyla Sobral and an exhibition design conceived for the specific spaces of Sesc Pinheiros.

A visual path through multiple Brazils

The exhibition starts from the idea that the image, in its many forms of circulation (from record covers to GIFs, from photography to the printed matter that runs through everyday life), is central to the construction of the Brazilian imaginary. With artists from different contexts, the selection presents a diverse country still under construction, traversed by tensions between memory and the present, avoiding a closed discourse and remaining open to reflection. Along this path, historical and contemporary images come together to revisit questions that remain latent, suggesting continuities between past and present.

More than an authorial selection, Delírio Tropical – Recanto suggests a field of listening in which the works dialogue with one another and collectively build one possible reading of Brazil among many other plausible ones, a reading that does not aim to be totalizing and even acknowledges its absences and gaps. The curatorship is organized as an articulation of these perspectives, and the result is a path through a Brazil in which different bodies, identities, and narratives emerge not as representation but as presence, activating other ways of seeing, imagining, and relating to the country.

The exhibition design guides visitors through an immersive flow that begins in an almost ritualistic space, evoking a break with crystallized views, and moves on through sections addressing themes such as identity, memory, media, territory, and power. Along the way, works from different periods and contexts enter into dialogue, creating unexpected connections and prompting new readings of Brazil.

The relationship between image and circulation is also highlighted in the installation, which incorporates elements of everyday visual culture, such as magazine covers, record covers, and documentary records, that help build the Brazilian collective imaginary. In this sense, Delírio Tropical – Recanto invites visitors not only to look at images but to reflect on how they shape perceptions, narratives, and meanings.

The exhibition is part of Sesc São Paulo's institutional commitment to broadening access to contemporary art and fostering encounters between different audiences and cultural repertoires. It invites the public to reimagine Brazil through its diversity, contrasts, and symbolic richness. The program also includes educational activities, guided tours, and workshops, expanding the possibilities for dialogue with audiences.

Visitor information

Exhibition | Delírio Tropical – Recanto

Period

May 6 to October 13

Tuesday to Saturday, 10:30 a.m. to 9 p.m.; Sundays and public holidays, 10:30 a.m. to 6 p.m.

Venue

Sesc Pinheiros

Rua Paes Leme, 195, Pinheiros, São Paulo - SP

In collaboration with Cristina Tolovi and Luana Fortes, Anexo Galeria Marília Razuk presents the group exhibition Quarto [Bedroom], continuing a curatorial investigation into domestic spaces and their symbolic and affective dimensions.

Starting from the idea of the bedroom as an intimate, private space, the exhibition proposes a sensitive approach to this territory where individuals meet themselves and, occasionally, the other. The works reflect on states of solitude and on relationships of romantic and/or sexual companionship, and explore the dream world, reveries, and the subjective constructions that emerge in this space.

Quarto develops questions already addressed in the group show Sala de Jantar [Dining Room], held in May 2025, which took as its starting point Dorothea Tanning's emblematic work Hôtel du Pavot, Chambre 202, establishing connections with Surrealism and its investigations of the unconscious and domestic space.

The exhibition takes place at Anexo Galeria Marília Razuk, at number 62 Rua Jerônimo da Veiga, a space for special projects whose architecture was originally conceived by Lina Bo Bardi and later adapted by Isay Weinfeld. The venue reinforces the dialogue between architecture and curatorial proposal, enriching the visitor's experience.

Bringing together the artists Ana Dias Batista, Ana Sant’Anna, A Transälien, Bruno Faria, Carolina Colichio, Débora Bolzsoni, Estúdio Rain, João Loureiro, Karola Braga, Lyz Parayzo, Maria Tereza Bomfim, Marcus Deusdedit, Mónica Heller, Patricia Faragone, Regina Vater, Rodrigo Bueno, Rose Afefé, Seba Calfuqueo, and Thiago Honório, Quarto proposes an immersive route through different contemporary approaches that investigate intimacy, the body, desire, and spaces of retreat.

The group show invites the public to cross a sensitive territory where the private becomes shareable, and where the bedroom, more than a physical space, reveals itself as a field of subjective projection, memory, and imagination.

Artists in the exhibition:

Ana Dias Batista, A Transälien, Carolina Colichio, Débora Bolzsoni, Estúdio Rain, Karola Braga, Lyz Parayzo, Maria Tereza Bomfim, Marcus Deusdedit, Mónica Heller, Patricia Faragone, Regina Vater, Rose Afefé, Seba Calfuqueo, and Thiago Honório.

Visitor information

Exhibition | Quarto

Period

May 9 to June 6

Monday to Friday, 10:30 a.m. to 7 p.m.; Saturday, 11 a.m. to 4 p.m.

Venue

Anexo Galeria Marília Razuk

Rua Jerônimo da Veiga, 131 – Itaim Bibi, São Paulo - SP

Galeria Marília Razuk presents the exhibition corpo assentado, cabeça voando [seated body, flying head], curated by Camila Bechelany and Leo Felipe. The show brings together a group of works from the gallery's collection in which drawing is not merely a technique but an expanded practice: a field of thought, gesture, and inscription in the world.

Drawing emerges as a spontaneous, almost choreographic impulse, close to dance. Although often perceived as an innate skill, it is built over time, through the continuous exercise of situating the body and experiencing space. It has to do with wanting to inscribe oneself in the world, to settle, to find one's place. At the same time, drawing is a practice for getting lost, for letting oneself be carried along, with or without direction, shifting rhythm across the surrounding surfaces.

The exhibition proposes thinking about the line in its ambiguous nature, between image and writing, between record and invention. It is an elementary language and, at the same time, one with limitless possibilities.

corpo assentado, cabeça voando invites the public to explore these different ways of drawing, understanding the line not as a starting point or preparatory stage but as an autonomous expression, capable on its own of sustaining the complexity of artistic making.

Bringing together artists of different generations and approaches, the exhibition presents drawing as a territory of continuous experimentation in which thought and matter meet.

Participating artists: Amilcar de Castro, Ana Dias Batista, Ana Matheus Abbade, André Dahmer, Cabelo, Claudio Cretti, Débora Bolzsoni, Flora Rebollo and Flavushh, Jaider Esbell, João Loureiro, Johanna Calle, José Leonilson, Luiza Sigulem, Manuel Brandazza, Maria Laet, Mariana Serri, Marilá Dardot, Paulo Nazareth, Renata Haar, Thiago Barbalho, and Waldomiro Mugrelise.

Visitor information

Exhibition | corpo assentado, cabeça voando

Period

May 9 to June 6

Monday to Friday, 10:30 a.m. to 7 p.m.; Saturday, 11 a.m. to 4 p.m.

Venue

Anexo Galeria Marília Razuk

Rua Jerônimo da Veiga, 131 – Itaim Bibi, São Paulo - SP

The exhibition Com.Fiar brings together artists Cyra Moreira and Cecilia Tilkian in a work developed through a collaborative process.

It took 480 days and 58 meetings of trust and sharing to reach 125 continuous meters, spinning weaves with sensitivity, exchanging and adding up, because Com.Fiar presupposes the other, side by side, as it should be in life.

As the outcome of this process, the work invites reflection on creation, sharing, and joint construction in the visual arts.

Visitor information

Exhibition | Com.Fiar

Period

May 10 to June 7

Wednesday to Sunday, 10 a.m. to 5 p.m.

Venue

Galeria Marta Traba | Memorial da América Latina

Av. Mário de Andrade, 664, Barra Funda, São Paulo, SP

Pinakotheke opens its new space in Higienópolis, São Paulo, with the exhibition “Surrealismos: arte para além da razão” [Surrealisms: Art Beyond Reason]. Curated by Max Perlingeiro and Tadeu Chiarelli, the show brings together around 100 works by 60 artists from different parts of the world, offering a broad overview of Surrealism and its developments in modern and contemporary art. Admission is free.

Housed in a spacious house on Rua Minas Gerais, on the corner of Praça Marechal Cordeiro de Farias, Pinakotheke's new space sits on a 700 m² plot, with 180 m² devoted to exhibition areas and 370 m² of outdoor space. The new address marks an important institutional reorganization for Pinakotheke, founded in Rio de Janeiro in 1979 by Max Perlingeiro, and consolidates a new visual identity and a plan for national and international expansion.

The exhibition is organized into sections devoted to European, Latin American, North American, and Caribbean artists, featuring historical names such as Salvador Dalí, René Magritte, Pablo Picasso, Giorgio de Chirico, Joan Miró, Louise Bourgeois, Marcel Duchamp, Leonora Carrington, Roberto Matta, and Diego Rivera. Among the Brazilians, highlights include works by Tarsila do Amaral, Maria Martins, Flávio de Carvalho, Cícero Dias, Farnese de Andrade, Tunga, and Erika Verzutti.

In addition to paintings, sculptures, prints, and photographs, the exhibition features special rooms dedicated to Maria Martins and Louise Bourgeois, as well as screenings of videos by contemporary artists such as Letícia Parente, Lenora de Barros, Kátia Maciel, and Lia Chaia. The route also includes excerpts from classic films associated with the Surrealist imaginary, such as “Un chien andalou”, by Luis Buñuel and Salvador Dalí, and “Le Sang d’un poète”, by Jean Cocteau.

During the exhibition, Pinakotheke Editora will launch the bilingual book “Surrealismos: arte para além da razão”, with more than 300 pages and texts by invited researchers and critics. Pinakotheke São Paulo is located at Rua Minas Gerais, 246, in Higienópolis, and is open Monday to Friday from 10 a.m. to 6 p.m., and on Saturdays and public holidays from 10 a.m. to 4 p.m.

Visitor information

Exhibition | Surrealismos: arte para além da razão

Period

May 18 to August 15

Monday to Friday, 10 a.m. to 6 p.m.; Saturdays and public holidays, 10 a.m. to 4 p.m.

Venue

Pinakotheke São Paulo Higienópolis

Rua Minas Gerais, 246, Higienópolis, São Paulo - SP

Beatriz Milhazes, a major name in Brazilian art, is known for work that combines geometric rigor with an always festive atmosphere, the product of her exuberant palette. Textile art, chita (printed cotton fabric), embroidery, typically Brazilian weaving, and Indigenous graphic patterns are references she draws on, transforming them into a language of her own.

“Gravuras do acervo da Pinacoteca de São Paulo” [Prints from the Pinacoteca de São Paulo Collection] brings together for the first time a group of 27 prints produced between 1996 and 2019, the result of Beatriz's collaboration with Jean-Paul Russell, founder of Durham Press, a studio publishing prints, artist's books, and unique works, based in Pennsylvania, United States.

The Pinacoteca is the only museum in the world that holds this set of works.

Complexity and beauty

Visitors can enjoy the multicolored prints Beatriz developed alongside Jean-Paul Russell, with floral patterns forming portals, garlands, and leafy branches.

The artist's production is marked by a language of complexity and beauty, and by the coherence with which she moves between different techniques, always starting from painting and arriving at printmaking.

Her works also feature sinuous forms, discs, mandalas, and bead necklaces, enriching the Brazilian geometric tradition. The exhibition also reveals how Milhazes assembles and reassembles forms, colors, and seemingly empty spaces, as in O pato [The Duck] (1996) and Noite de verão [Summer Night] (2006).

Visitor information

Exhibition | Gravuras do acervo da Pinacoteca de São Paulo

Period

May 16 to March 14, 2027

Wednesday to Monday, 10 a.m. to 6 p.m. (last entry at 5 p.m.); free admission on Saturdays and on the second Sunday of each month

Venue

Pina Estação

Lg. General Osório, 66, São Paulo - SP

MASP (Museu de Arte de São Paulo Assis Chateaubriand) presents Claudia Alarcón & Silät: viver tecendo [living by weaving]. The exhibition brings together 25 works spanning the artistic production of Claudia Alarcón (La Puntana, Argentina, 1989) & Silät, a collective of more than one hundred weavers from the Wichí people. Curated by Adriano Pedrosa, MASP's artistic director, and Laura Cosendey, MASP assistant curator, the exhibition marks the debut of the artist and the group at a Brazilian museum.

The works are made with chaguar threads, from a bromeliad with resilient fibers native to the semi-arid climate of the Gran Chaco, the largest biome in Latin America after the Amazon, which covers the northern and northeastern regions of Argentina and extends into Paraguay. The preparation of chaguar and the technique of interlacing the threads by hand, without a loom, come from the making of yica bags, a central object in Wichí culture. Traditionally, the yica is square, with geometric patterns representing the flora and fauna of its territory and evoking motifs such as armadillo ears, owl eyes, and turtle shells. Although this is the starting point of Alarcón & Silät's work, their pieces go beyond this traditional repertoire. Following workshops that proposed new formats for yica bags, the Silät collective was organized in 2023 and began producing textiles within an artistic context.

Historically, Wichí textiles had earthy, reddish, and grayish-blue tones, but the artists began adding more intense colors with aniline dyes when preparing the threads, achieving exuberant shades of orange and fuchsia, for example. Another important innovation in Alarcón & Silät's work lies in the production process itself: whereas women traditionally always wove individually, the members of Silät developed methods that allow several weavers to work on the same piece simultaneously or to continue another weaver's work.

The mythology of the Wichí people also shapes Alarcón & Silät's works. In Kates tsinhay – Mujeres estrellas [Star Women], 2023, Claudia Alarcón evokes the myth of the star women. According to this belief, women were stars in the sky who came down to Earth every night on chaguar threads they had woven themselves. They came to feed, stealing the fish the men caught. When the men found out, they cut the threads, and the women remained on Earth. This work and others inspired by this symbolic narrative blend ancestral geometries with figurative elements to outline stars, moons, celestial bodies, and starry skies.

“I recover legends and stories of our people; I feel there is a lot of work to be revived. I think about how to recover it, because it is something that perhaps cannot be said aloud; we cannot shout it. But the textile speaks too. There are those who can understand or feel it in the textile. I realized that, although we weave in silence, everything is said in the textile,” says Alarcón.

The Wichí call their territory tayhi and consider it a fundamental part of their identity, with a spiritual and symbolic dimension. In Spanish, the region is called monte. Yet although the name suggests mountains, the local terrain is mostly flat. Everyday experience, the wind, the day, dusk, the night, the constellations, and many other elements of life in the monte are present in the colors and in the organic and geometric forms of Alarcón & Silät's works. In the abstraction Kyelhkyup – El otoño [Autumn], 2023, in MASP's collection, the weavers' sensitive attention to natural cycles portrays the changes in tone, texture, and light as the seasons pass in the monte.

Weaving together, combined with the innovations they introduced, has made possible textile compositions that carry a multiplicity of voices and colors, articulating traditional patterns with a contemporary visual and poetic repertoire. “The textiles have become banners of struggle, standards that carry messages and stories and give voice to the women of the community,” says Laura Cosendey.

Both the individuality of the artists and the collective dimension come through in the installation Hilulis ta llhaiematwek – Un coro de yicas [A Chorus of Yicas] (2024–25), which brings together more than one hundred bags, each made by a member of the group. Personal choices of color and pattern stand out when the works are displayed side by side, while their presentation as a whole reinforces the political character of the collective's organization, which has enabled it to criticize issues such as the devaluation of ancestral knowledge and the precarious working conditions of the weavers.

In the exhibition, the works are presented in frames or on vertical wooden structures that recall the way these textiles are produced and, occasionally, displayed in the community where the weavers live. The set N’äyhay wet layikis – Caminos y cicatrizes [Paths and Scars] is one of the works shown on this display system proposed by MASP. The collective conceived the textile composition in 2025 for July 9, the day Argentina celebrates its independence. The women wove it to denounce the violent repression carried out over time by the Argentine state against Indigenous populations.

Claudia Alarcón & Silät: viver tecendo is part of MASP's annual program dedicated to Histórias latino-americanas [Latin American Histories]. The year's program also includes exhibitions by La Chola Poblete, Sandra Gamarra Heshiki, Santiago Yahuarcani, Colectivo Acciones de Arte, Damián Ortega, Sol Calero, Carolina Caycedo, Pablo Delano, Rosa Elena Curruchich, Manuel Herreros and Mateo Manaure, and Jesús Soto, as well as an international group exhibition.

Visitor information

Exhibition | Claudia Alarcón & Silät: viver tecendo

Period

March 6 to August 2

Tuesdays free, 10 a.m. to 8 p.m. (last entry at 7 p.m.); Wednesday and Thursday, 10 a.m. to 6 p.m. (last entry at 5 p.m.); Friday, 10 a.m. to 9 p.m. (free admission from 6 p.m. to 8:30 p.m.); Saturday and Sunday, 10 a.m. to 6 p.m. (last entry at 5 p.m.); closed on Mondays.

Online booking required at masp.org.br/ingressos

Venue

MASP

Avenida Paulista, 1578, São Paulo

DAN Galeria opens the exhibition Máscaras, Ivald Granato – Quem é você? [Masks, Ivald Granato – Who Are You?], curated by Maria Alice Milliet. This previously unseen show brings together a group of paintings Granato made in the late 1990s and places them in dialogue with African masks from the collections of Christian-Jack Heymès and the Mastrobuono family. The opening takes place in São Paulo and ties in with the program of the 22nd edition of SP-Arte.

The exhibition starts from a point central to any reading of Ivald Granato's career. For decades, his public presence, his performative actions, and his irreverent energy played a decisive role in the reception of his work. This aspect is unavoidable, but it does not sum him up.

Granato was also a painter of great technical mastery, an exceptional draftsman, and a deep connoisseur of art history. He moved between languages and repertoires with rare intimacy, not to repeat styles but to put them under tension through a very personal visual intelligence. Maria Alice Milliet recalls that, by the time he reached maturity, after more than three decades of exhibitions, awards, and recognition, Granato already held a prominent place in the Brazilian art scene. A talented draftsman and painter, he had passed through the various “isms” and Pop Art in close tune with his time.

This exhibition helps bring this point back into focus, presenting it as part of a consistent investigation in which painting, memory, theatricality, and identity intertwine. In the late 1990s, Granato stepped back for a moment from his more immediate engagement with the contemporary and turned his gaze to deeper dimensions of his own formation. The series connected to masks grew out of this shift. According to the curator, this passage marks a turning point in his career, when the artist sought values tied to the past, to ancestry, and to Brazilian cultural memory. In 1998, Granato produced a series of paintings on paper called The Mask. He then developed larger works grouped under the title Quem é você – The Mask. For the artist, these masks were visual notes of faces that populated his imagination.

In discussing this production, Maria Alice Milliet shifts the usual reading, which tends to link this kind of repertoire only to the European modernist tradition. In Granato's case, it is tied to a search for cultural roots and a desire for identity affirmation. The curator recalls his mixed-race origins, with Black and Indigenous ancestry, and places this series in a field of belonging, symbolic recognition, and reverence, marked by an approach that comes from within. This aspect is decisive for understanding the exhibition. African ancestry appears as a structural force in Brazilian culture and as a key to rereading an important part of his work.

Milliet notes that, after a first foray into figures closer to a popular, carnivalesque universe, Granato turned to tribal masks. In the series whose question gives the exhibition its title, a succession of strange faces emerges from dark backgrounds, in compositions that condense graphic intensity, chromatic energy, and a strong symbolic charge. Representation carries particular weight in this group of works: it is a figure of passage, a condensation of gesture, the invention of a persona, and a ritual presence. Ten years after his death, Máscaras, Ivald Granato – Quem é você? gives us a clearer understanding of the artist's complexity.

Visitor information

Exhibition | Máscaras, Ivald Granato – Quem é você?

March 28 to June 25

Monday to Friday, 10 a.m. to 7 p.m.; Saturdays, 10 a.m. to 1 p.m.

Venue

DAN Galeria

Rua Estados Unidos, 1638 01427-002 São Paulo - SP

Casa de Cultura do Parque presents the exhibition “Badauê”, by Andrea Brazil (Gabinete), as part of its I Ciclo Expositivo (First Exhibition Cycle). Curated by Claudio Cretti, the Casa's artistic director, with a text by Ana Avelar, the show brings together works marked by geometrization and the visual reconfiguration of vernacular architecture.

The path of Andrea Brazil (São Paulo, 1972) between Salvador and the island of Itaparica, in the Recôncavo Baiano, underpins the functional character of her visual language. The artist grew up surrounded by coastal architecture marked by anonymous interventions, made with shards of roof tile and leftover materials, which make up what she calls “drawing in space”.

In this process, the façades of houses and shops, window grilles and other urban elements are reorganized as lines, colors and voids. “It is a collective, vernacular production that condenses history, climate, technique and aesthetic desire into a single constructive gesture,” says Avelar.

Brazil's interest in the vernacular architecture of her childhood deepened during a trip to the Algarve region of Portugal. There, the Moorish influence, recognizable in rounded corners and geometric patterns, resonated with what the artist knew from Bahia.

For Avelar, the connection is not accidental: Brazil points out that the Black Malês who led the 1835 revolt in Salvador were mostly of Muslim origin and carried a visual tradition that seeped into the city's material culture. In this sense, ornament holds historical layers that the surface of the façades does not explicitly reveal.

One of the series on view is built on wooden boards covered with layers of wall putty applied in two stages. The grille designs, drawn from an internalized visual memory, are carved into the surface until the underlying color is revealed. When the work leans on color, it stands out for its optical vibration, moving between the stridency of Pop Art and the silence of matte surfaces.

Alongside Brazil's solo show, the I Ciclo Expositivo includes the group exhibition “O horror, o humor e o absurdo” (The horror, the humor and the absurd), at the Galeria do Parque, and “Calendário” (Calendar), by Felipe Rezende (Projeto 280X1020). The cycle moves along the threshold between the real and the imaginary and treats fabulation as an indispensable tool for subverting the current configurations of the world. Claudio Cretti says the cycle seeks, through different languages, to “stretch the limits between the conceivable and the inconceivable, highlighting the potential of fiction for thinking critically about reality”.

Finally, the Performances program opens on March 28, 2026, at 5 p.m., with “Lago” (Lake) by performer and dancer Maria Noujaim. By translating mythology into movement, the artist explores hybrids of animal and human, taking the Greek myth of Leda and the Swan as her starting point.

The I Ciclo Expositivo is curated by Claudio Cretti, conceived by the Instituto de Cultura Contemporânea (ICCo) and funded through the Lei Rouanet (Rouanet Law) of the Ministry of Culture, with sponsorship from Banco BV, Laranjinha and Banco Itaú.

Visitor information

Exhibition | Badauê

March 28 to June 28

Wednesday to Sunday, 11 a.m. to 6 p.m.

Venue

Casa de Cultura do Parque

Av. Prof. Fonseca Rodrigues, 1300 - Alto de Pinheiros, São Paulo - SP, 05461-010

Curated by Ayrson Heráclito and Rodrigo Moura, the exhibition Mestre Didi – invenção e ancestralidade na arte afro-brasileira (Mestre Didi – invention and ancestry in Afro-Brazilian art), at Itaú Cultural, spans the entire career of the priest-artist, highlighting his importance both in broadening the horizons of the art world and in advancing the fight against racism.

Over the course of his life, the Bahian Mestre Didi (1917-2013) moved through and expanded the domains of art and religion. Didi's many artistic and craft skills earned him the title of mestre (master), just as his vocation and religious practice placed him in prominent positions within the cults. It is this journey that Itaú Cultural (IC) presents from April 8 to July 5, 2026.

In addition to Didi's creations in the visual arts, the solo show covers his textual and literary output and his role in developing significant organizations and events, in Brazil and abroad, dedicated to researching and promoting African and Afro-diasporic cultures. The exhibition space also explores connections between Didi and other creators, such as his contemporary Abdias Nascimento, the subject of a show in the Ocupação Itaú Cultural project in 2016.

The opening of Mestre Didi – invenção e ancestralidade na arte afro-brasileira takes place on April 7 at 7 p.m. To celebrate the occasion, the terreiro Ilê Asipá presents Oro Ojés, a traditional ceremony held at all festivities of the space founded by Didi himself in Salvador.

Visitor information

Exhibition | Mestre Didi – invenção e ancestralidade na arte afro-brasileira

April 8 to July 5

Tuesday to Saturday, 11 a.m. to 8 p.m.; Sundays and holidays, 11 a.m. to 7 p.m.

Floors 1, -1 and -2

Venue

Itaú Cultural

Avenida Paulista, 149, São Paulo - SP

Artist and architect Edo Rocha is the subject of a major retrospective at the Oca do Ibirapuera in São Paulo. Curated by Agnaldo Farias, the exhibition “Edo Rocha: Arte e Arquitetura” (Edo Rocha: Art and Architecture), which opens to the public on May 6, brings together more than 400 works and offers a broad overview of a career spanning over 60 years, highlighting the connections between his artistic production and his architectural projects.

Spread across the four floors of the building designed by Oscar Niemeyer, the exhibition gathers drawings, paintings, sculptures, photographs and installations, as well as models, plans and other forms of representing architectural and urban projects, revealing how the artist's visual investigations also unfold in his practice as an architect.

“This exhibition is a summary of my production. It shows the interplay between art and architecture and how, little by little, these two creative sides connect. Bringing together work of this kind was only possible in a space like the Oca, where it is feasible to show these various artistic and creative activities,” says Edo Rocha.

The exhibition design avoids hierarchies between the different languages and modes of representation, proposing a route through distinct moments of the artist's career: from his first drawings, made while still a teenager, through his investigations of color and abstraction and his graphic production, to architectural projects and recent installations. The route culminates in a previously unseen educational work that reflects on the future of the planet and nature's responses to human actions.

Art and architecture in dialogue

Recognized as one of the most inventive names in contemporary Brazilian architecture, Edo Rocha has built a singular career in which artistic practice and design work go hand in hand. Throughout the exhibition, it becomes clear how the visual experiments in his sculptures and drawings reverberate in buildings and urban projects, revealing a creative thinking that cuts across disciplines.

On the ground floor, visitors encounter granite sculptures and metallic and mirrored pieces from the series Os três mosqueteiros (The Three Musketeers). Architectural projects are presented on banners accompanied by videos, while a group of models forms a kind of imagined city, combining both built structures and conceptual proposals.

The historical works also include pieces shown at the 10th Bienal de São Paulo, in 1969, a period when the artist was consolidating his presence in the visual arts. One of Edo Rocha's best-known architectural projects, Allianz Parque, also takes center stage in the exhibition with an installation dedicated to the stadium, which dialogues with the works Onda Verde (Green Wave) and Palmeiras.

“According to Paul McCartney, this is the best arena in the world, and he is not the only one to say so. That is because Edo loves acoustic technology and has deep knowledge of the field,” says Agnaldo Farias. “It is a remarkable case, outside the box, of someone who moves naturally across many disciplines.”

Photography, nature and perception

On the second floor, the exhibition presents three photographic series produced this year: Japão (Japan), Wabi Sabi and O Cosmo (The Cosmos). In Japão, Rocha captures the country's landscapes (lakes, gardens and trees with vibrant autumn foliage), highlighting species symbolic of Japanese culture, such as the katsura.

In Wabi Sabi, the artist explores the Japanese aesthetic that values imperfection, impermanence and simplicity. Fallen leaves, cracks in tree trunks and the marks of time reveal a beauty tied to the natural passage of the years.

The series O Cosmo is presented both as photographs and as an installation of 80 suspended monitors with mirrors on their backs, creating a visual effect reminiscent of a kaleidoscope.

Music, technology and sustainability

Another important dimension of Rocha's career, his relationship with music, appears on the Oca's lower ground floor. There, a grand piano capable of reproducing performances by renowned pianists, synchronized with video projections, is part of a space conceived as a lounge where visitors can rest and reflect.

Known for integrating design, technology and sustainability into his projects, the artist also incorporated into the exhibition acoustic panels made with Ecoshapes, boards made from recycled PET, installed along the exhibition route.

Environmental concern, present in Rocha's work since the beginning of his career, also appears in an audiovisual installation addressing themes such as the water crisis, rising carbon dioxide emissions and the intensification of natural disasters.

The exhibition is supported by the Lei Federal de Incentivo à Cultura (Lei Rouanet).

Edo Rocha: Born in São Paulo in 1949, Edo Rocha began his artistic training at a young age, holding his first solo exhibition at the Ars Mobile gallery when he was 16.

As a teenager he lived in Salvador (BA), where he studied painting at the Instituto Cultural Brasil Alemanha and took part in group exhibitions linked to its experimental painting course. Back in São Paulo, he took part in the 9th Bienal de São Paulo, in 1967, and returned to the show two years later, at the 10th Bienal, in 1969.

During the 1970s, he twice received the Prêmio de Aquisição Jovem Arte Contemporânea (Young Contemporary Art Acquisition Prize), awarded by the Museu de Arte Contemporânea da USP. Since then, he has built a career that moves between the visual arts, architecture and urbanism, establishing himself as a singular figure on the Brazilian cultural scene.

Visitor information

Exhibition | Edo Rocha: Arte e Arquitetura

May 6 to July 20

Tuesday to Sunday, 10 a.m. to 7 p.m. (last entry at 6 p.m.)

Venue

OCA

Pavilhão Lucas Nogueira Garcez, Parque Ibirapuera – São Paulo, SP

A broad survey of contemporary Brazilian visual production, spanning different languages and featuring some 130 artists from every region of the country, takes shape in the exhibition Delírio Tropical – Recanto at Sesc Pinheiros, curated by Orlando Maneschy with associate curator Keyla Sobral. With around 280 works, the show offers an immersion in the imaginary of a plural, multiple and constantly reinvented Brazil, composing a mosaic that ranges from historical images to contemporary productions focused on singular experiences in distinct territories and ways of understanding the world.

Delírio Tropical – Recanto builds a narrative that brings together different visual and artistic forms, from photography to video, including posters, street paste-ups (lambes), magazines, sculptures, paintings and objects. The exhibition reflects on the role of images in shaping an understanding of the country and its multiple identities, through a curatorial selection of artists committed to their experiences, territories and ways of being, including cultural perspectives from the country's various regions alongside a diversity of contemporary voices.

The exhibition includes works by artists such as Anna Maria Maiolino, Ayrson Heráclito, Cildo Meireles, Claudia Andujar, Dalton Paula, Denilson Baniwa, Hal Wildson, Índigo Braga, Rosângela Rennó and Karim Aïnouz.